this post was submitted on 07 Mar 2025

Buy European

Overview:

The community to discuss buying European goods and services.

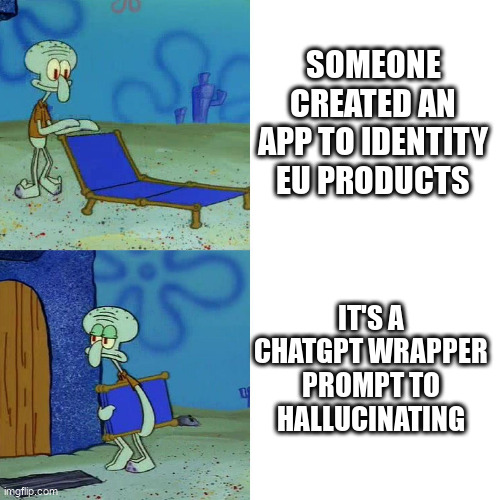

Devs are aware. This was a quick n dirty prototype and they already knew the issue with using ChatGPT. They did it to make something work asap. In an interview (Danish) the devs acknowledged this and are moving toward using an LLM developed in France (I forget the name, but it's irrelevant to the point that they will drop ChatGPT).

If that's their solution, then they have absolutely no understanding of the systems they're using.

ChatGPT isn't prone to hallucination because it's ChatGPT, it's prone because it's an LLM. That's a fundamental problem common to all LLMs.

Plus I don't want some random ass server to crunch through a couple hundred watt-hours if scanning the barcode and running that against a database would not just suffice but also be more accurate.
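For what it's worth, the barcode route can be sketched in a few lines. This is only a rough illustration, not how the app works: the `PRODUCTS` table, its `brand_country` field and the `lookup_origin` helper are made up for the example, and a real app would query a maintained product database instead. The EAN-13 check digit rule is the standard one. Note that the first digits of a barcode only identify the GS1 organisation that issued the number, not where the product was made, so the country has to come from the database.

```python
from typing import Optional

# Hypothetical product table (assumption for illustration only).
# A real app would query a maintained product database instead.
PRODUCTS = {
    "4006381333931": {"name": "Example pen", "brand_country": "DE"},
}


def is_valid_ean13(code: str) -> bool:
    """Check the EAN-13 check digit (weights 1 and 3 alternate over the first 12 digits)."""
    if len(code) != 13 or not (code.isascii() and code.isdigit()):
        return False
    digits = [int(c) for c in code]
    # Positions are 1-based in the standard: odd positions weigh 1, even positions weigh 3.
    total = sum(d * (3 if i % 2 else 1) for i, d in enumerate(digits[:12]))
    return (10 - total % 10) % 10 == digits[12]


def lookup_origin(code: str) -> Optional[str]:
    """Return the stored country for a barcode, or None if it is invalid or unknown."""
    if not is_valid_ean13(code):
        return None
    product = PRODUCTS.get(code)
    # Unknown products yield None: the lookup never guesses.
    return product["brand_country"] if product else None
```

The point of the sketch is the last line: when the product is not in the table, the answer is "unknown" instead of a plausible-sounding guess.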

More accurate, efficient, environmentally friendly. Why are we trying to solve all of this with LLMs?

It's easier to program, I suppose: just set up a prompt and give back the result of that.

Exactly, developers can't just come up with a complete database of all products in existence and where they come from, whereas LLMs are already trained on basically all data that is available on the Internet, with additional capabilities to browse the web if necessary. This is a reasonable approach.

phi-4 is the only one I am aware of that was deliberately trained to refuse instead of hallucinating. it's mindblowing to me that that isn't standard. everyone is trying to maximize benchmarks at all cost.

I wonder if diffusion LLMs will be lower in hallucinations, since they inherently have error correction built into their inference process

Even that won't be truly effective. It's all marketing, at this point.

The problem of hallucination really is fundamental to the technology. If there's a way to prevent it, it won't be as simple as training it differently.