This is very similar to global warming. If government AI policy is to strengthen the military, empire, Zionism, and oligarchy, then voters need to be kept miserable, with bigger issues in their lives and hatred towards trans Hispanic immigrant pet eaters.

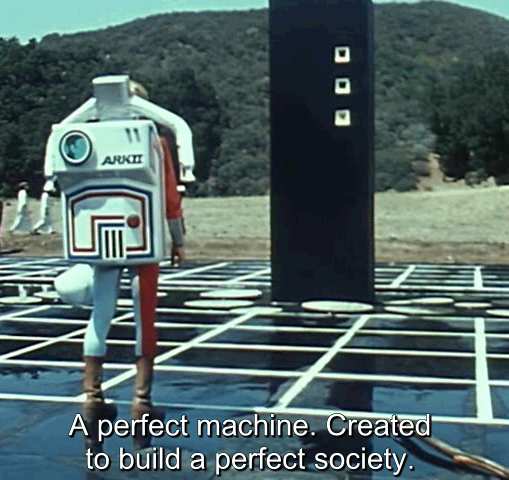

Skynet is awesome, and will be programmed for such supremacy. The same tech bros who say polite things about UBI/freedom dividends/universal high income are the ones vying to take all of our money to deliver Skynet. If the slave class doesn't gain political influence before Skynet arrives, then "power sharing with the slaves" through UBI is far less likely than genocide of the uppity classes.